Embedding Vector vs Standard Vector: The Mathematical Soul of Modern AI

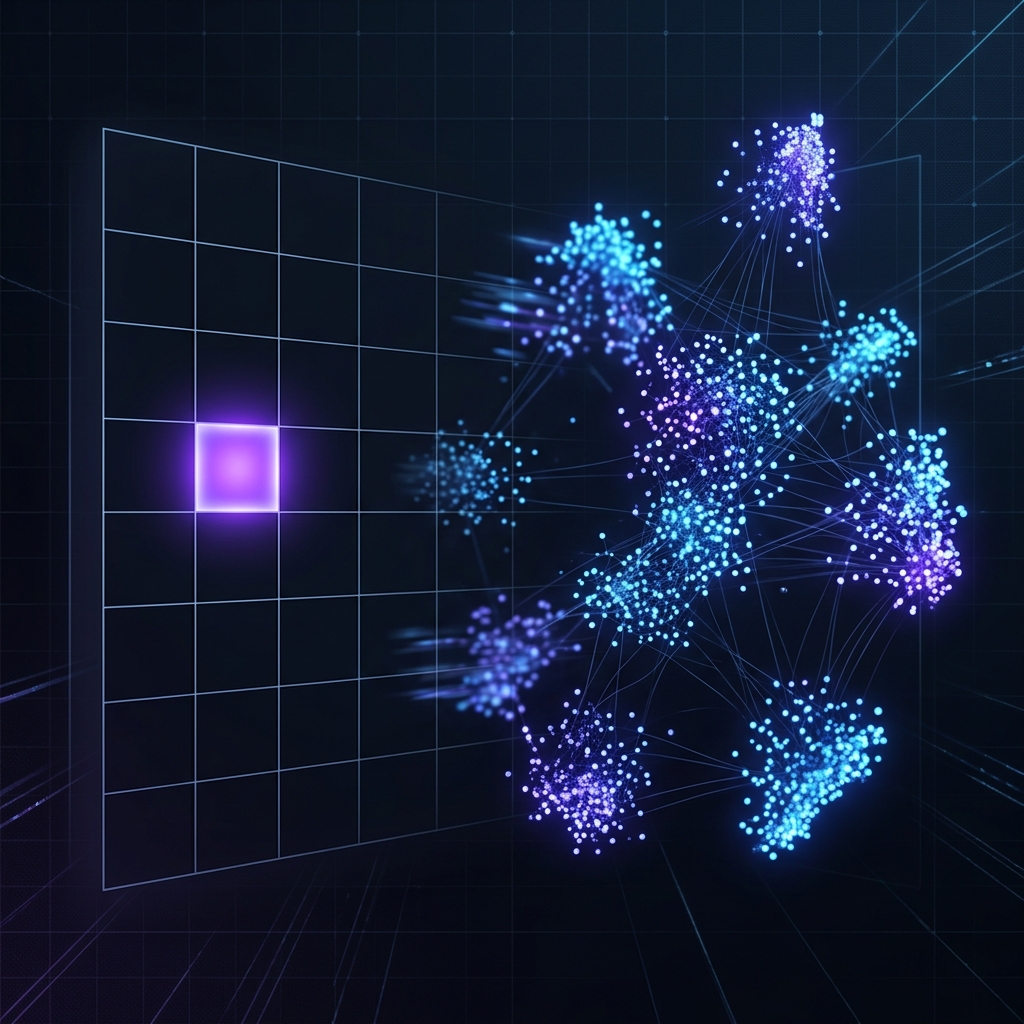

The fundamental abstraction in modern Artificial Intelligence is the Vector. Mathematically speaking, both an Embedding Vector and a Standard Vector are high-dimensional arrays of numerical values. Much like how a physical storage medium represents both a cinematic stream and a static text file as raw binary sequences, the underlying data structure remains identical.

However, the profound divergence lies in the inductive bias of the generation process and the latent semantic depth the resulting numbers encapsulate. This article formalizes the distinction between engineered feature spaces and learned semantic manifolds.

1. Feature Origin and Semantic Encoding

Standard Vector (Explicit & Human-Engineered)

- Origin: Explicitly defined via deterministic engineering or rule-based heuristics.

- Meaning: Each dimension maps bijectively to a specific, discrete, or countable physical attribute.

- Example: In a classical physics simulation, a vector for a rigid body might be , mapping to

[Mass, Velocity, Acceleration]. - Limitation: Discrete encoding methods like One-Hot Encoding create orthogonal vectors where the spatial distance between "Dog" and "Cat" is mathematically identical to the distance between "Dog" and "Object" . These vectors exhibit zero awareness of biological or operational commonalities.

Embedding Vector (Implicit & Model-Learned)

- Origin: Autonomous generation via gradient-based optimization within neural architectures (e.g., Transformers, Word2Vec).

- Meaning: Dimensions represent Latent Features—hidden characteristics inferred from the global distribution of the training corpus (e.g., "furriness," "regality").

- Semantic Continuity: Concepts with shared underlying properties are naturally clustered within the hyperspace. The distance metric (Euclidean) or angular proximity (Cosine Similarity) directly quantifies semantic relatedness.

2. Information Density: Sparse vs. Dense Topology

The computational efficiency of these vectors is governed by their sparsity.

- Sparse Representation (Standard): In traditional NLP (TF-IDF/One-Hot), a vocabulary of size requires -dimensional vectors. For a single token, entries are null (0). This results in massive memory overhead and computational redundancy without capturing inter-token relationships.

- Dense Representation (Embedding): Modern architectures project massive vocabularies into a compact latent space (typically 384 to 1536 dimensions). These vectors are "dense"—nearly every entry is a non-zero floating-point coefficient representing a specific fractional weight of semantic information.

3. The Phenomenon of Semantic Arithmetic

One of the most revolutionary breakthroughs in Embedding Theory is the emergence of linear semantic regularity. Because embeddings map concepts into a logically structured geometric manifold, mathematical operations correspond to logical transformations:

This confirms that the model has successfully encoded the "gender" vector as a consistent direction within the latent space. Similarly, geographical and relational hierarchies are preserved:

4. Scientific Summary Comparison

| Metric | Standard Vector | Embedding Vector |

|---|---|---|

| Generation | Hard-coded / Engineered | Learned / Gradient-Optimized |

| Logic | Explicit / Human-Readable | Implicit / Latent |

| Sparsity | High (Majority Zeros) | Low (Non-Zero Coefficients) |

| Geometry | Orthogonal / Discrete | Manifold / Continuous |

| Algebra | Value Comparison | Semantic Arithmetic |

[!TIP] Key Takeaway: Standard vectors are best for deterministic logic where absolute precision of individual attributes is required. Embedding vectors are the standard for understanding complex, high-dimensional relationships where meaning is derived from context and similarity.